The AI Factory Grind

This is a continuation of the saga from my last post: I Upgraded My AI Factory

I built Seneschal as a way to step lightly into the world of Harness Engineering and AI Orchestration by building my own factory. I hired Claude to work in my little factory and I've been using it for my personal projects for a few weeks. I have some insights I'd like to share on the process as I iterate and improve the system.

The Stripe Inspiration

I was inspired to go down this path after reading about Stripe's Minions and their AI pipeline. The fact that they handle over 1,300 PRs a week was impressive, and while I was obviously not going for the same scale, I wanted to see if I could apply the concept at a smaller level to learn more about the process because 1) building things is fun and 2) I feel like this represents the fundamental shift in software engineering we've all been warned about.[1]

One important distinction I didn't mention much in my previous posts (and something that's become much more apparent as I've developed Seneschal) is that Stripe was uniquely positioned for such a process to be successful. They already possessed an incredibly well-tested codebase with a massive amount of regression architecture. Their own developers would've had a hard time breaking anything, so slotting AI into that process resulted in some serious gains.

As I have found, my codebases and small side projects are in no way up to the level of Stripe's (obviously), and thus I have found it more difficult to create a reliable pipeline that can one-shot features with little follow-up. This doesn't mean it's impossible, dear reader, no no. What it means is that there's a lot of effort that needs to go into building solid deterministic guardrails into my projects.

Some Lessons

You Really Need to Define Your Feature

The majority of the failures in my pipelines came from my own laziness (or even just overconfidence in the AI) in writing detailed instructions for what I wanted. I'd have a pipeline marked as ready, go pull down the PR and look at the code, and realize it had left out something, or made an odd decision, or built something really unpolished. In almost every case, when I went back and looked at my original task definition, I had been far too succinct and had left out critical details. I left out explanations of which parts of the system would need to be looked at, neglected to mention possible side effects, and was overall too generic about what I wanted in the final feature.

This can be improved on in a number of ways:

- Simply writing more. I can structure my task definition a lot better.

- Writing and providing relevant documentation. I ran a task to integrate a third party AI into my app and provided it a link to the API's llms.txt documentation file. The results were incredible. I implemented a "Code Map" tool in Seneschal that analyzes the codebase and creates "modules" and a file tree. This can be used during task creation to highlight specific sections of the app.

- Better feature-definition resources. If I could create mockups, wireframes, etc and give the pipeline access to them (via API or MCP, etc) then this could greatly improve the quality.

- Tests. This is probably the most important one, because these pipelines lend themselves to test-driven development. Building a robust test suite into your app is not optional, and having a way to define tests for the task before it's executed would be a huge game changer for reliability, as the AI could be instructed to continue working until these tests pass.
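To make the list above a bit more concrete, here is a minimal sketch of a structured task definition paired with a "keep going until the tests pass" loop. Everything in it is hypothetical: `TaskDefinition`, its field names, and `run_until_green` are illustrations, not Seneschal's actual code, and `agent_step` and `run_tests` stand in for whatever invokes the AI and the test suite.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TaskDefinition:
    """Hypothetical task shape; none of these field names come from Seneschal."""
    goal: str
    relevant_modules: list[str] = field(default_factory=list)
    side_effects: list[str] = field(default_factory=list)
    doc_links: list[str] = field(default_factory=list)
    acceptance_tests: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the task as plain text, skipping empty sections."""
        sections = [
            ("Goal", [self.goal]),
            ("Look at these parts of the system", self.relevant_modules),
            ("Possible side effects", self.side_effects),
            ("Documentation", self.doc_links),
            ("Tests that must pass", self.acceptance_tests),
        ]
        lines: list[str] = []
        for title, items in sections:
            if items:
                lines.append(f"{title}:")
                lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


def run_until_green(
    task: TaskDefinition,
    agent_step: Callable[[str], None],
    run_tests: Callable[[], tuple[bool, str]],
    max_attempts: int = 3,
) -> Optional[int]:
    """Call the agent, then the tests, until they pass or attempts run out.

    Returns the attempt number that passed, or None if none did.
    """
    prompt = task.to_prompt()
    for attempt in range(1, max_attempts + 1):
        agent_step(prompt)
        passed, output = run_tests()
        if passed:
            return attempt
        # Feed the failure back so the next attempt has something to act on.
        prompt = f"{task.to_prompt()}\n\nThe tests failed with:\n{output}"
    return None
```

The cap on attempts is a deliberate choice in this sketch: without it, a task whose tests can never pass would burn tokens indefinitely.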

The Cost of Iteration Can Be Hard To Calculate

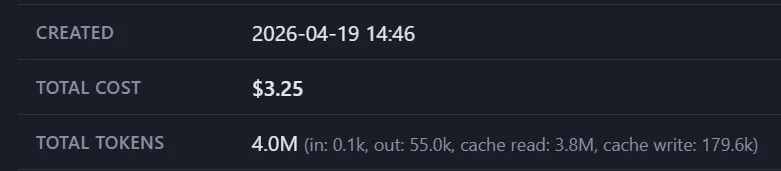

Answering "how much has my automation software cost me" is pretty easy. In Seneschal I leverage Claude Code's JSON output that shares detailed expense information so when a run completes I know exactly what it cost:
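A minimal sketch of pulling those numbers out of a finished run might look like the following. It assumes the run was started with Claude Code's JSON output format and that the result object carries `total_cost_usd`, `num_turns`, and `duration_ms`; treat those field names as assumptions and check them against your CLI version. `RunCost` and `parse_run_cost` are hypothetical names, and the sample payload is made up.

```python
import json
from dataclasses import dataclass


@dataclass
class RunCost:
    cost_usd: float
    turns: int
    duration_s: float


def parse_run_cost(raw_json: str) -> RunCost:
    """Extract cost fields from one Claude Code JSON result object.

    Field names are assumed from the CLI's result format; missing fields
    fall back to zero rather than raising.
    """
    result = json.loads(raw_json)
    return RunCost(
        cost_usd=float(result.get("total_cost_usd", 0.0)),
        turns=int(result.get("num_turns", 0)),
        duration_s=result.get("duration_ms", 0) / 1000,
    )


# Made-up payload for illustration, not real output from a run.
sample = '{"type": "result", "total_cost_usd": 0.42, "num_turns": 7, "duration_ms": 93000}'
cost = parse_run_cost(sample)
print(f"${cost.cost_usd:.2f} over {cost.turns} turns in {cost.duration_s:.0f}s")
```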

But that's not the whole picture. About 60% of the time I need to follow up with more work. This takes the form of manual one-off Claude Code sessions and (shockingly) manually writing/fixing code. Non-orchestrated Claude usage is trackable, of course, but I have to admit I'm not very careful about keeping an eye on how many turns and tokens it took to shore up a feature. I'm definitely not good at tracking how much of my time it took.

I do take what I learned from these extra sessions and go back to my Seneschal workflow to tweak skills and add gates that will, hopefully, improve the output of the next feature. This represents the cost of iterating on my harness,[2] and it's a cost that can be a bit difficult to calculate.
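One way to put a number on it is to keep a small per-feature ledger that adds the untracked pieces to the pipeline's reported cost. The sketch below is purely illustrative: `FeatureLedger` and its fields are hypothetical, and pricing your own time at a flat hourly rate is an assumption, not something Seneschal does.

```python
from dataclasses import dataclass


@dataclass
class FeatureLedger:
    pipeline_usd: float         # reported by the orchestrated run
    followup_usd: float = 0.0   # manual Claude Code sessions after the run
    my_hours: float = 0.0       # time spent reviewing and fixing by hand
    harness_hours: float = 0.0  # time spent tweaking skills and gates afterwards

    def total_usd(self, hourly_rate: float) -> float:
        """Token spend plus time, with time priced at hourly_rate."""
        return (
            self.pipeline_usd
            + self.followup_usd
            + (self.my_hours + self.harness_hours) * hourly_rate
        )


# Invented numbers, only to show the arithmetic.
ledger = FeatureLedger(pipeline_usd=2.0, followup_usd=1.5, my_hours=0.5, harness_hours=1.0)
print(ledger.total_usd(hourly_rate=50.0))
```

With numbers like these, the time spent dwarfs the token spend, which is why skipping the time tracking hides most of the real cost.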

Some Wins

Building Things That Would Never Exist

This is true of AI in general, but I've found a great deal of success in building things that I'd otherwise never have the time or energy to tackle. I've dialed in my workflow quite a bit for my SuperTextAdventure project, and while that is purely for my own entertainment at the moment, I'm seeing features come to life because I could simply define a goal and set my workflow loose.

I wrote my own personal budgeting app and it didn't have any reliable banking API connected (I was just manually uploading my bank's CSV files). A friend recommended Teller.io and after some research I decided it would be a perfect fit for me. I set up the needed authentication, then handed the whole thing off to a Seneschal workflow and wouldn't you know it - a perfect one-shot implementation. I pulled down the PR, tested it and ended up merging it without a single change because it worked exactly as I'd hoped. I don't think I ever would've taken the time to do this work as I already had a (crappy, but low-effort) solution.

I've also spun up some really fun one-off projects that allowed me to experiment or learn new things.

Getting a "Feel" For AI

When I was growing up it was a skill to "know how to Google something" because people who didn't grow up with computers didn't innately know what to type to find what they needed. I, however, being of a younger persuasion at the time, spent some formative years using Google and became quite adept at using it as a tool. It became a natural skill.

Now, with AI, there are plenty of ways to use it incorrectly (FAR more so than there were with Google), and I think for those of us who have a lot of ingrained habits and preconceived ideas about how things should work, there's a lot of mental rewiring needed to find the proper boundaries for using AI in a software environment. Building something like this automation harness has, I believe, drastically improved my relationship and experience with AI. It's helping me gain some of that innate sense for how to best utilize its capabilities (and understand what they are).

What's Next?

I will continue to develop Seneschal. It's a fantastic playground to experiment with AI automation and learn new things. Also when I read about a new skill or strategy I have a place to test it for real.

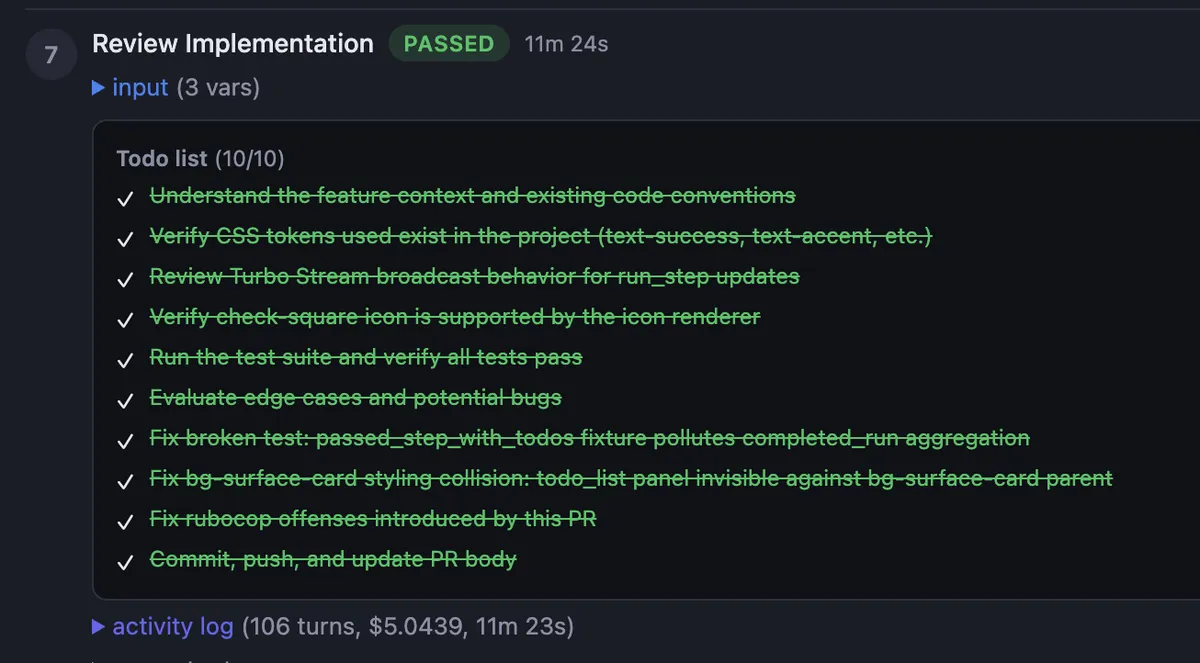

I've recently added a "Review" skill that runs after the implementation has been completed that goes deep into what was done (with fresh context) and improves/fixes things before the CI checks run. This takes more time/tokens but has drastically improved the output of a lot of features.

Another minor addition that I really like is parsing the TodoWrite calls from the Claude JSON and displaying that in the UI as the job runs:
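A rough sketch of that parsing, assuming the job is run with Claude Code's streaming JSON output (one JSON event per line) and that TodoWrite calls arrive as `tool_use` blocks inside assistant messages with a `todos` list of `content`/`status` items. That event shape is an assumption worth verifying against your CLI version, and `latest_todos` and `render` are hypothetical helpers, not Seneschal's code.

```python
import json
from typing import Iterable


def latest_todos(stream_lines: Iterable[str]) -> list[dict]:
    """Return the todo list from the most recent TodoWrite call in the stream."""
    todos: list[dict] = []
    for line in stream_lines:
        line = line.strip()
        if not line:
            continue
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # tolerate partial or non-JSON lines
        if not isinstance(event, dict) or event.get("type") != "assistant":
            continue
        message = event.get("message") or {}
        for block in message.get("content", []):
            if (
                isinstance(block, dict)
                and block.get("type") == "tool_use"
                and block.get("name") == "TodoWrite"
            ):
                # Each call carries the full list, so the newest one wins.
                todos = block.get("input", {}).get("todos", [])
    return todos


def render(todos: list[dict]) -> str:
    """Format todos as a plain-text checklist for display."""
    marks = {"completed": "[x]", "in_progress": "[~]", "pending": "[ ]"}
    return "\n".join(
        f"{marks.get(t.get('status'), '[?]')} {t.get('content', '')}" for t in todos
    )
```

Calling `latest_todos` on the lines received so far and re-rendering after each new event is enough to keep a progress list current while the job runs.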

Conclusions

Always keep building. Keep learning. LLMs are here to stay and people are always finding new ways to use them to make things better and life easier.

I'm always happy to chat, shoot me an email at: rick [at] makethingswork [dot] dev

-- Rick

[1] I'm referring to the whole "AI will take our jobs" theory that's been around since day one of ChatGPT. I've always said that I wasn't scared of AI taking my job, but rather of people who think AI can take my job. I always anticipated that the reality would be somewhat less drastic and (again this is my opinion) I think Harness Engineering is that reality. Product/Business owners can't ignore the value of rapidly generated AI code, but they also can't ignore that it's not anywhere near reliable enough on its own.

[2] That sounds incredibly dirty to me.