I Upgraded My AI Factory

This is a continuation of the saga I started in my previous post: https://blog.makethingswork.dev/congrats-ai-has-a-new-job-for-you/

I Built An AI Orchestrator

- Yes, there are already thousands out there.

- Yes, mine is simple.

- Yes, I'm using straight Rails.

- Yes, it only supports Claude Code and GitHub.

I was aware of all of this going in, and each of these was an intentional decision. I'm a fan of simplicity and I tend to learn the most by doing things myself. Even in this case, where I let Claude drive development, I've learned a lot about this process.

Seneschal

After the tinkering in my last article, I read more about Stripe's Minions and thought, "I should try to make this on my own!"

I built a tool called Seneschal[^1] that provides a lightweight wrapper for AI orchestration. The main goal was to be able to mix AI steps with deterministic steps that verify what the AI is doing - and run it on my Raspberry Pi at home. You can watch an overview video on that site if you're interested. In short, it's a Rails app that runs claude and gh commands via shell executions and monitors the output. It uses this output to determine how to advance through a user-defined workflow. The end result is a PR with a (hopefully) one-shotted feature ready to review.
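To make the "run a command, look at the output, decide whether to advance" loop concrete, here is a minimal Ruby sketch. It is not Seneschal's actual code: the names `StepResult`, `run_command`, and `run_workflow` are made up for illustration, and a real workflow would need branching, retries, and persistence.

```ruby
require "open3"

# Outcome of one step: did it pass, and what did it print?
StepResult = Struct.new(:ok, :output)

# Runs an external command (argv form, so no shell interpolation)
# and treats a zero exit status as success.
def run_command(*argv)
  stdout, stderr, status = Open3.capture3(*argv)
  StepResult.new(status.success?, stdout + stderr)
end

# Walks the steps in order and stops at the first one that fails.
# Each step is a hash with a :name and a :run callable returning a StepResult.
def run_workflow(steps)
  log = []
  steps.each do |step|
    result = step[:run].call
    log << [step[:name], result.ok]
    return { status: :failed, failed_step: step[:name], log: log } unless result.ok
  end
  { status: :completed, failed_step: nil, log: log }
end

# Example wiring (hypothetical prompt and step names):
#   run_workflow([
#     { name: "plan",      run: -> { run_command("claude", "-p", "Write an implementation plan") } },
#     { name: "verify_pr", run: -> { run_command("gh", "pr", "view", "--json", "state") } }
#   ])
```

Keeping each step as a plain callable means an AI step and a deterministic check look identical to the runner, which is the whole point of mixing them.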

NOTE: This is not an advertisement for my app. The app is meant as an educational example and helps me talk about this subject with a lot more knowledge than I'd otherwise have. If you want to use it, please feel free but know it was built with my needs in mind. I currently have this running at home on my Raspberry Pi 5.

Determinism

I touched on this in the last post but the BIG glaring problem with generative AI is that it is probabilistic. By its very nature we can never trust it to be 100% accurate. That said, current-day models' ability to generate functional code is really impressive. "Good enough" is probably the general term I'd use. It'll never impress a computer scientist but if it can build an application and start saving time/money then there's a measurable win in there.

What flipped the switch in my head was reading about Stripe's Minions and how they combine AI processes with deterministic steps to keep cruft to a minimum. These steps take many forms and will undoubtedly vary based on your project. In Seneschal, I created an AI node that generates a draft PR, followed by a simple node that runs gh and verifies that the PR was created appropriately. I also created a bash script that takes the AI's implementation Markdown and ensures that certain sections are present before passing it off to the actual implementation skill.
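Both of those checks are small enough to sketch. The version below is a Ruby stand-in rather than the bash script described above, and the section names in `REQUIRED_SECTIONS` are hypothetical placeholders. The PR check assumes the JSON comes from something like `gh pr view --json state,isDraft,headRefName`.

```ruby
require "json"

# Hypothetical section titles; substitute whatever your plan template requires.
REQUIRED_SECTIONS = ["Summary", "Files to Change", "Test Plan"].freeze

# Returns the required titles that have no matching Markdown heading.
def missing_sections(markdown, required = REQUIRED_SECTIONS)
  headings = markdown.scan(/^[#]{1,6}[ \t]+(.+?)[ \t]*$/).flatten.map(&:downcase)
  required.reject { |title| headings.include?(title.downcase) }
end

# True only if the JSON describes an open draft PR on the expected branch.
# Unparseable output counts as a failed check rather than an exception.
def draft_pr_ok?(gh_json, expected_branch)
  pr = JSON.parse(gh_json)
  pr["state"] == "OPEN" && pr["isDraft"] == true && pr["headRefName"] == expected_branch
rescue JSON::ParserError
  false
end
```

Neither function knows or cares that an LLM produced its input. That is what makes it a guardrail: the answer is the same every time for the same input.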

AI wrapped in deterministic guardrails looks like a productive future.

Highlights

I'm not going to give a full run-down of what Seneschal does but I'm going to focus on a few things that I've already found immensely useful in my personal development.

Steps That Focus on Fixing Things

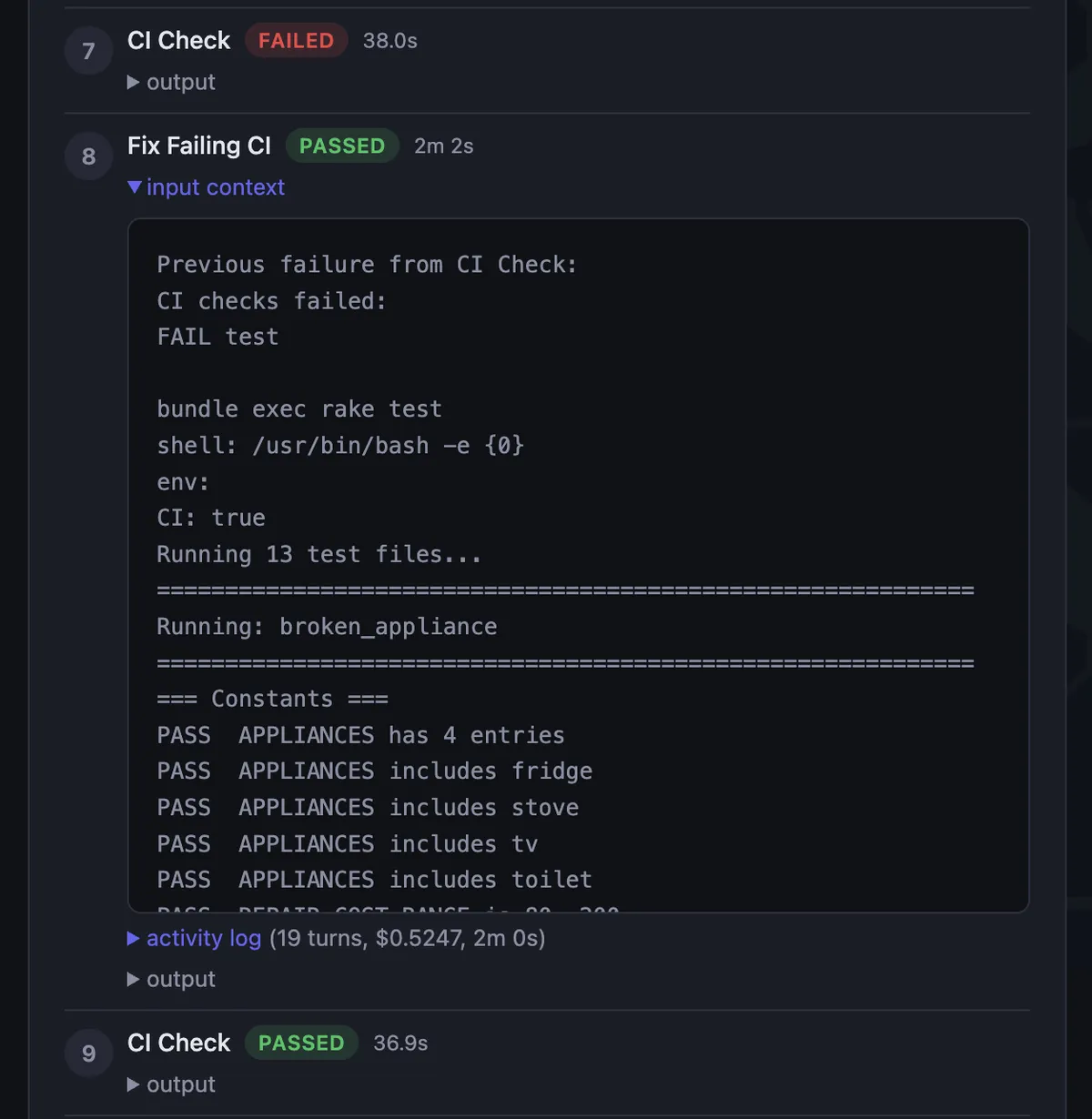

I wrote a skill that implemented a feature based on the implementation plan generated by another skill. This worked well! I then added a step that, once the implementation was done, would monitor the status of the GitHub CI checks for the project.

When the checks would fail, my original approach was to pass the error back to the Implementation skill and have it try again. What I found was that this took FOREVER and resulted in a lot of extra churn on the part of the LLM. So instead, I created a separate step with a specialized skill that ONLY focuses on fixing the errors from the CI failures. It's instructed to only gather the needed context. The CI step, if it fails, can be instructed to inject this step into the workflow and pass the failures to it. It now runs much faster and does a nice job of cleaning up CI failures.
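The injection idea can be sketched as a pure function over the remaining step queue. This is an illustration under my own assumptions, not how Seneschal stores its workflow: `QueuedStep`, the step names, and the `attempts_left` retry budget are all hypothetical.

```ruby
# A step waiting to run, plus whatever input it should receive.
QueuedStep = Struct.new(:name, :input)

# Decides what runs after a CI check.
# On success the queue is unchanged. On failure, a narrow "fix_ci" step
# (fed only the failures) and a CI re-check are placed ahead of the rest.
def next_steps_after_ci(ci_passed, failures, remaining, attempts_left)
  return remaining if ci_passed
  raise "CI still failing after retries" if attempts_left <= 0

  [QueuedStep.new("fix_ci", failures), QueuedStep.new("ci_check", nil)] + remaining
end
```

The fix step only receives the failure output, not the whole implementation plan, which mirrors the "only gather the needed context" instruction.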

Easy Ideation

What's so great about this is that I can easily type out my idea. I can be as detailed or as succinct as I want. I can ask Claude to format my idea for me and then just hit go and before long I have a PR to review. This may seem obvious but this is where orchestration (if well-built) can add a lot of value outside of the engineering world. Product Managers can be given tools like this that generate workable features that can be immediately reviewed and tested.

On a personal level this allows me to build projects that would never have been built otherwise. And I think that's pretty cool.

Composability

The way the app works is very versatile. This is true of most orchestration platforms but it's a key component.

- I can easily add an MCP node and wire it up to an external service that pulls documentation.

- I can write a step that triggers a deploy of the branch to a hosting service and then triggers remote Claude execution to check specific functionality that can only be tested with the app running in a specific environment.

- I can implement a Human-in-the-Loop step that sends me an email and requires me to manually approve or reject the continuation of the workflow.

Iterating in this manner becomes the majority of the work involved.
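One way to picture this kind of composability is a registry that maps a node type to the code that runs it. The sketch below is hypothetical (the `NODE_TYPES` registry, `register_node`, `run_node`, and the `:approved` context key are names I made up), with a Human-in-the-Loop gate as the example node.

```ruby
# Registry mapping a node type name to the callable that runs it.
NODE_TYPES = {}

def register_node(type, &handler)
  NODE_TYPES[type] = handler
end

def run_node(type, context)
  handler = NODE_TYPES.fetch(type) { raise ArgumentError, "unknown node type: #{type}" }
  handler.call(context)
end

# Stand-in for "email me and wait": the workflow stays paused
# until a human has recorded a decision in the context.
register_node("approval") do |context|
  case context[:approved]
  when true then :continue
  when false then :abort
  else :waiting
  end
end
```

Adding an MCP node or a deploy node would then be another `register_node` call, with no changes to the code that walks the workflow.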

Easy Cost Tracking

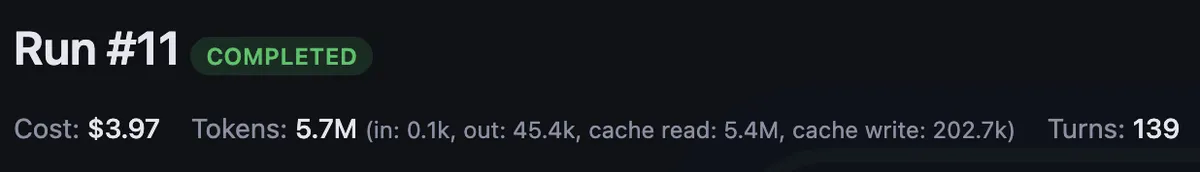

An easy metric for how well this all "works" is how well-written and functional the requested features are at the end of the process.

However, if you've ever done an intense session with a coding agent, you might look up and have no idea how exactly you got here or how many prompts you used.

With an orchestration tool like this (and the SUPER handy streaming JSON output from the claude CLI tool) you can capture data like what's shown in the screenshot above.

This gives us something really easy to point at and measure and improve.
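As a rough sketch of how that capture could work: the streaming output is one JSON object per line, so a few lines of Ruby can total up the numbers for a run. The field names here (`type`, `total_cost_usd`, `num_turns`, `duration_ms`) are my understanding of what the final "result" event contains when running with `--output-format stream-json`. Treat them as assumptions and check them against the output of your CLI version.

```ruby
require "json"

# Parses one line of the stream; returns nil for anything that is not JSON.
def parse_event(line)
  JSON.parse(line)
rescue JSON::ParserError
  nil
end

# Totals cost, turns, and duration across every "result" event in the stream.
# Other event types (system, assistant, ...) are ignored.
def summarize_run(stream)
  summary = { cost_usd: 0.0, turns: 0, duration_ms: 0 }
  stream.each_line do |line|
    next if line.strip.empty?

    event = parse_event(line)
    next unless event.is_a?(Hash) && event["type"] == "result"

    summary[:cost_usd] += event.fetch("total_cost_usd", 0.0).to_f
    summary[:turns] += event.fetch("num_turns", 0).to_i
    summary[:duration_ms] += event.fetch("duration_ms", 0).to_i
  end
  summary
end
```

Storing one of these summaries per workflow step is enough to see which steps are expensive and whether a prompt change actually made things cheaper.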

Note About 3rd Party Anthropic Policy

It's typical that, just as I built Seneschal, Anthropic changed its policy on third-party harnesses. However, after looking at it and their reasons, I don't think it's all that big of a deal. It's not surprising at all that the pricing would change; I think it was inevitable. I like Claude Code for how well it works and how useful its structured output is. It can also be pointed at different models, so I'm not going to concern myself with trying to support other coding tools - I fully expect that they'll all make similar changes to their policies now that Anthropic has taken that step.

Conclusions and Next Steps

There's a lot of room for improvement here, but I think making those improvements is slowly going to become the role of a software engineer. We'll spend less time being task-oriented and more time optimizing the automation workflows. My tool isn't going to change the world but it'll continue to be a vehicle for me to explore my own approach to this way of developing.

My next steps will be investigating ways to make code searching more efficient. Stripe has built a ton of tooling around context engineering[^2] and ways to provide their Minions with just the right amount of context needed to get the job done.

I'll keep you posted. As always, I love hearing from you. Send me a message: rick [at] makethingswork [dot] dev

-- Rick

[^1] I wasn't aware it was a word either. Claude suggested it and I loved it. https://en.wikipedia.org/wiki/Seneschal

[^2] Fantastic guide on Context Engineering: https://blog.bytebytego.com/p/a-guide-to-context-engineering-for